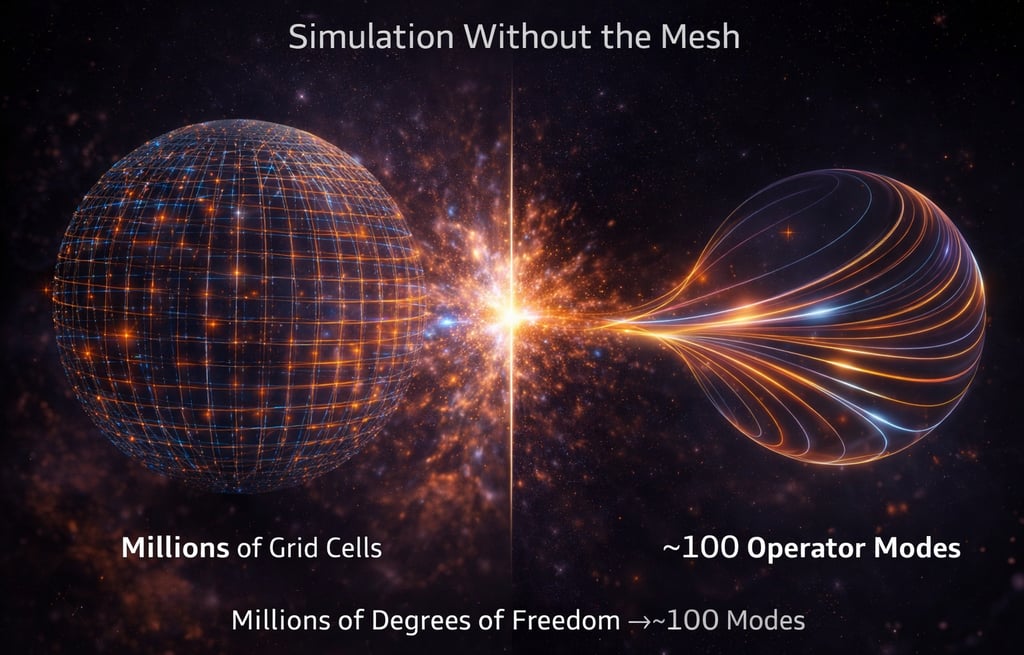

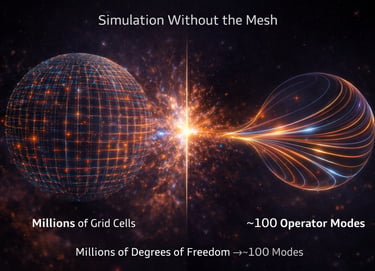

Simulation Without the Mesh

Deterministic, Operator-Based Simulation — From Millions of Grid Cells to ~100 Modes

Traditional simulation frameworks—CFD, FEA, and their variants—approximate reality by discretizing space into millions or billions of grid cells, then numerically solving governing equations across each element. This approach assumes that fidelity comes from resolution: more cells, more accuracy. In practice, however, much of this dimensionality is redundant. The system’s true behavior is not distributed uniformly across all degrees of freedom; instead, it is concentrated in a small number of dominant modes. What engineers interpret as “complexity” or “noise” is often the byproduct of representing structured dynamics in an over-resolved coordinate system. The mesh is not revealing physics—it is obscuring it.

Our framework reverses this paradigm. Rather than starting from discretization, we begin by identifying the operator that governs the system and extracting its spectral structure directly. Through modal decomposition and operator learning, we observe that real physical systems, across structures, thermal fields, and even quantum hardware, collapse onto low-dimensional manifolds. In many cases, 2–3 modes capture 95–99% of system behavior. This is not an approximation imposed for efficiency; it is an empirical property of the system itself. By evolving these modes through a learned operator, we simulate the system’s dynamics directly, without resolving every point in space. The result is a reduction from millions of grid cells to on the order of 100 meaningful degrees of freedom.
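To make the idea of "a few modes capture most of the behavior" concrete, here is a minimal sketch of one common form of modal decomposition: a singular value decomposition of mean-centered snapshots. The choice of SVD, the function name `modal_energy`, and the synthetic six-channel data set are illustrative assumptions, not the framework's actual pipeline or data.

```python
import numpy as np

def modal_energy(snapshots: np.ndarray) -> np.ndarray:
    """Cumulative fraction of variance captured by the leading modes.

    snapshots has shape (n_samples, n_channels), one observed state per row.
    """
    centered = snapshots - snapshots.mean(axis=0)
    # Squared singular values are proportional to the variance carried by each mode.
    singular_values = np.linalg.svd(centered, compute_uv=False)
    energy = singular_values**2
    return np.cumsum(energy) / energy.sum()

# Synthetic stand-in data (an assumption): six measured channels that are
# really driven by two hidden oscillations, plus a small amount of noise.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 500)
latent = np.column_stack([np.sin(2 * np.pi * 0.35 * t),
                          np.cos(2 * np.pi * 0.05 * t)])
mixing = rng.normal(size=(2, 6))
snapshots = latent @ mixing + 0.01 * rng.normal(size=(500, 6))

cumulative = modal_energy(snapshots)
print(np.round(cumulative, 4))  # entry k-1 = share of variance in the first k modes
```

On data built this way, the second entry of `cumulative` is close to 1, because only two directions in the six-channel space carry real signal. Whether a measured system behaves the same way is an empirical question that this check answers directly.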

This fundamentally redefines simulation. Instead of solving equations over space, we evolve structure over modes. Instead of iterative convergence loops, prediction emerges directly from the operator’s spectrum. Instead of introducing turbulence models or stochastic assumptions to explain residuals, we resolve them as interactions between modes. In this sense, simulation transitions from approximation to representation. We are no longer asking, “How finely must we discretize to approximate reality?” but rather, “What is the minimal structure that fully describes it?” This shift aligns simulation with the underlying mathematics of motion—operator theory, Sturm–Liouville structure, and spectral evolution—rather than with numerical convenience.
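The claim that prediction can come from the operator's spectrum rather than from repeated stepping can be illustrated for the simplest case: a linear, diagonalizable, discrete-time operator. The sketch below is written under those assumptions. The function name `evolve_spectral` and the 2×2 damped-rotation operator are hypothetical examples, not part of the framework described here.

```python
import numpy as np

def evolve_spectral(operator: np.ndarray, initial_state: np.ndarray, steps: int) -> np.ndarray:
    """Jump `steps` ahead in one shot using the operator's eigen-decomposition."""
    eigenvalues, eigenvectors = np.linalg.eig(operator)
    # Express the initial state in the eigenvector (modal) basis.
    coefficients = np.linalg.solve(eigenvectors, initial_state.astype(complex))
    # Each mode evolves independently: its coefficient is scaled by eigenvalue**steps.
    return (eigenvectors @ (eigenvalues**steps * coefficients)).real

# Hypothetical operator: a lightly damped rotation in a two-mode space.
theta = 0.3
operator = 0.98 * np.array([[np.cos(theta), -np.sin(theta)],
                            [np.sin(theta),  np.cos(theta)]])
initial_state = np.array([1.0, 0.0])

direct = evolve_spectral(operator, initial_state, steps=50)
stepped = np.linalg.matrix_power(operator, 50) @ initial_state
print(np.allclose(direct, stepped))
```

The spectral route gives the same state as applying the operator fifty times, but the cost does not grow with the prediction horizon. Each eigenvalue's magnitude and phase also read directly as a mode's decay rate and oscillation frequency.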

For industry, the implications are immediate. Engineering teams can replace days or weeks of simulation time with near-instant evaluation, enabling real-time design iteration and feasibility checks. Compute costs drop dramatically, removing dependence on large HPC clusters. More importantly, insight improves: instead of sifting through massive field outputs, engineers work directly with the modes that govern behavior, which makes systems easier to understand, optimize, and control. Whether in telecom towers, data centers, aerospace structures, or manufacturing processes, this translates into faster development cycles, reduced over-engineering, and the ability to predict and mitigate failure modes before they emerge. Cutting the number of degrees of freedom by orders of magnitude turns simulations that traditionally take weeks into results delivered in minutes.

A key distinction in our approach is that we are not compressing simulation—we are uncovering its native structure. Traditional reduced-order models attempt to approximate a high-dimensional system after it has already been simulated. In contrast, our framework identifies that the system itself never truly occupied that high-dimensional space to begin with. The dominant modes are not artifacts of compression; they are the natural coordinates of the system’s behavior. This shift—from approximation to discovery—changes how engineers interpret both data and physics.

Another important consequence is interpretability. In conventional simulation, engineers are often left analyzing massive field outputs—velocity fields, pressure distributions, thermal gradients—without a clear understanding of which components actually govern system behavior. By operating directly in modal space, we expose the few degrees of freedom that matter. This enables engineers to reason about systems in terms of cause and effect, rather than post-processing vast datasets. The result is not just faster simulation, but clearer engineering insight.

Our framework also changes how variability and uncertainty are handled. In traditional methods, unexplained residuals are often attributed to noise and handled through turbulence models or stochastic corrections. In our work, these residuals are not discarded—they are examined as structured interactions between modes. What appears random in a grid-based representation frequently resolves into coherent behavior when expressed in the correct basis. This allows systems to be modeled with greater fidelity without introducing additional complexity.

Finally, this approach enables a fundamentally different relationship between simulation and real-world data. Because the operator is learned directly from observed system behavior, simulation becomes naturally aligned with empirical reality. Models are no longer purely theoretical constructs that require calibration—they evolve alongside the system itself. This creates the foundation for continuously updating, real-time digital twins that remain accurate as conditions change, rather than degrading over time.

Traditional digital twin platforms, whether from Siemens, Ansys (including Ansys Twin Builder), or Dassault Systèmes, are built on the same foundational paradigm: mesh-based simulation, reduced-order approximations, and data overlays. While these platforms are powerful, they fundamentally rely on discretizing physics into high-dimensional representations and then attempting to simplify or calibrate them after the fact. As a result, what is called a “digital twin” is often a combination of simulation outputs, statistical models, and sensor dashboards: useful, but not truly reflective of how the system evolves.

Even more modern approaches—AI-driven twins, surrogate models, and reduced-order modeling techniques—do not resolve this limitation. They compress or approximate the output of simulation, rather than identifying the structure that governs it. These methods improve speed, but they remain dependent on the same underlying assumptions: that system complexity must be approximated, that variability is stochastic, and that accuracy requires either repeated simulation or continuous retraining. The result is a twin that must be maintained, recalibrated, and often revalidated as conditions change.

Our framework departs from this entirely. By identifying the governing operator and its dominant modes directly from data, we do not approximate the system—we represent it in its natural coordinates. The digital twin is not a reduced version of a high-dimensional model, nor is it a statistical surrogate. It is the system’s intrinsic structure, evolving in time. This allows the twin to remain stable, interpretable, and predictive without relying on repeated simulation loops or external correction layers.

This enables something no existing platform delivers: a truly living digital twin. Because the operator captures how the system evolves, new data does not require rebuilding the model—it refines the structure. The twin stays aligned with reality as conditions change, preserving accuracy over time rather than degrading. Instead of a static model that must be managed, the twin becomes a continuously evolving representation of the system itself.
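One conventional way to refine a linear operator as new data arrives, without refitting from scratch, is recursive least squares. The text does not say which update rule the framework uses, so the sketch below is only an illustration of the general idea. The class name `StreamingOperator`, the `prior_scale` parameter, and the synthetic data stream are all assumptions.

```python
import numpy as np

class StreamingOperator:
    """Linear operator A (next ≈ A @ current), refined one observation at a time."""

    def __init__(self, dim: int, prior_scale: float = 1e3):
        self.operator = np.zeros((dim, dim))
        # Running inverse of the accumulated state covariance; large = weak prior.
        self.inverse_cov = prior_scale * np.eye(dim)

    def update(self, current: np.ndarray, following: np.ndarray) -> None:
        """Recursive least-squares update from one (current, following) pair."""
        projected = self.inverse_cov @ current
        gain = projected / (1.0 + current @ projected)
        error = following - self.operator @ current  # one-step prediction error
        self.operator += np.outer(error, gain)
        self.inverse_cov -= np.outer(gain, projected)

# Synthetic stream (an assumption): a damped rotation observed with noise.
rng = np.random.default_rng(3)
theta = 0.3
true_operator = 0.98 * np.array([[np.cos(theta), -np.sin(theta)],
                                 [np.sin(theta),  np.cos(theta)]])
twin = StreamingOperator(dim=2)
state = np.array([1.0, 0.0])
for _ in range(500):
    next_state = true_operator @ state + 0.05 * rng.normal(size=2)
    twin.update(state, next_state)  # no stored history, no batch refit
    state = next_state

print(np.round(twin.operator, 3))
```

Each update costs a handful of small matrix operations, so the estimate can track incoming sensor data in real time. Tracking a system whose operator drifts would typically add a forgetting factor, which this sketch omits.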

For industry, this distinction is decisive. Engineering teams no longer need to choose between accuracy and speed, or between simulation and real-time insight. With a structurally grounded digital twin, systems can be monitored, predicted, and optimized continuously—without re-meshing, re-solving, or retraining. This transforms the digital twin from a visualization or analytics tool into a true operational asset—one that directly informs design, control, and decision-making at every stage of the system lifecycle.

Scientific Validation

From infrastructure to quantum systems, our results demonstrate that structure—not randomness—governs real-world behavior.

This paper presents a fundamentally different way of interpreting complex physical systems by analyzing real superconducting qubit calibration data through an operator-theoretic lens. Rather than assuming that variability in quantum systems is inherently stochastic, the study constructs a physically meaningful state space and applies modal decomposition to reveal the system’s intrinsic structure. The key finding is that what appears to be a six-dimensional system in measurement space collapses onto a low-dimensional manifold, with over 96% of variance captured by just two modes and nearly all behavior by three. This demonstrates that the system’s true degrees of freedom are far fewer than traditionally assumed, and that its behavior is governed by a constrained set of dominant modes rather than diffuse randomness.

Building on this structural insight, the paper introduces an operator-based evolution model to test whether this low-dimensional structure governs system dynamics over time. By learning a linear operator that maps the system forward, the study shows a 37.7% reduction in prediction error compared to a persistence baseline. This is a critical result: in systems dominated by noise, simple baselines are typically difficult to outperform. The improvement demonstrates that qubit behavior is not memoryless but follows a structured, partially predictable trajectory. In effect, the system retains information about its own evolution, indicating that what is commonly treated as “noise” actually contains embedded structure when viewed in the correct representation.
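The comparison described above, a learned linear operator against a persistence baseline, can be sketched as follows. This is a generic least-squares fit on synthetic data, not the paper's code or its qubit data set. The function names, the noise level, and the train/test split are illustrative assumptions, and the resulting percentage is not expected to match the 37.7% figure.

```python
import numpy as np

def fit_linear_operator(states: np.ndarray) -> np.ndarray:
    """Least-squares A such that states[k + 1] ≈ A @ states[k]."""
    past, future = states[:-1], states[1:]
    # Solve past @ A.T ≈ future in the least-squares sense.
    a_transposed, *_ = np.linalg.lstsq(past, future, rcond=None)
    return a_transposed.T

def rmse(predicted: np.ndarray, actual: np.ndarray) -> float:
    return float(np.sqrt(np.mean((predicted - actual) ** 2)))

# Synthetic trajectory (an assumption): damped rotation plus measurement noise.
rng = np.random.default_rng(1)
theta = 0.3
true_operator = 0.98 * np.array([[np.cos(theta), -np.sin(theta)],
                                 [np.sin(theta),  np.cos(theta)]])
states = np.empty((400, 2))
states[0] = [1.0, 0.0]
for k in range(399):
    states[k + 1] = true_operator @ states[k] + 0.05 * rng.normal(size=2)

train, held_out = states[:300], states[300:]
operator = fit_linear_operator(train)

# One-step-ahead errors on held-out data.
operator_error = rmse(held_out[:-1] @ operator.T, held_out[1:])
persistence_error = rmse(held_out[:-1], held_out[1:])  # "next state = current state"
reduction_pct = 100.0 * (1.0 - operator_error / persistence_error)
print(f"error reduction vs persistence: {reduction_pct:.1f}%")
```

The metric is the relative drop in one-step prediction error, `100 * (1 - operator_error / persistence_error)`. A positive value on held-out data means the state carries usable information about its own next step.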

For science and mathematics, this work bridges classical spectral theory and modern data-driven analysis in a meaningful way. It extends concepts from Sturm–Liouville theory and operator theory—traditionally applied to idealized systems—into real, noisy physical environments. The findings support a broader hypothesis: that apparent randomness is often not fundamental, but emerges from observing structured dynamics in an incomplete or misaligned basis. This reframes how motion, variability, and even time evolution are understood, suggesting that systems evolve along constrained manifolds defined by underlying operators, rather than through purely stochastic processes.

For industry and simulation, the implications are immediate and transformative. If complex systems—from quantum hardware to thermal and structural systems—are intrinsically low-dimensional, then the need for high-dimensional, mesh-based simulation is fundamentally reduced. Instead of modeling millions or billions of degrees of freedom, systems can be represented and evolved through a small number of dominant modes. This shifts simulation from brute-force approximation to structure-driven prediction, enabling faster computation, greater interpretability, and real-time decision-making. In this sense, the paper is not just a study of quantum systems—it is a proof point for a new paradigm of simulation itself.

This whitepaper establishes a fundamentally new interpretation of structural dynamics by analyzing wind-driven tower systems through an operator-based spectral framework rather than traditional mesh-based simulation. Using high-fidelity OpenFAST data, the study demonstrates that tower behavior, commonly treated as stochastic vibration, exhibits strong spectral concentration and low-dimensional structure. Instead of energy being distributed across a broad range of frequencies, the system is dominated by a small number of coherent modes, with a primary structural frequency near 0.35 Hz and a secondary forcing scale near 0.05 Hz. This immediately challenges the long-standing assumption that residual vibration is inherently random.
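Spectral concentration of this kind can be checked with a plain FFT power spectrum. The sketch below builds a synthetic signal containing the two frequencies quoted above (0.35 Hz and 0.05 Hz) plus noise. The amplitudes, sampling rate, record length, and noise level are assumptions chosen for illustration; this is not the OpenFAST data.

```python
import numpy as np

# Assumed sampling setup: 10 Hz for 400 s, so the frequency resolution is 0.0025 Hz.
sample_rate_hz = 10.0
n_samples = 4000
t = np.arange(n_samples) / sample_rate_hz

rng = np.random.default_rng(2)
signal = (1.0 * np.sin(2 * np.pi * 0.35 * t)    # structural-frequency stand-in
          + 0.4 * np.sin(2 * np.pi * 0.05 * t)  # slow forcing-scale stand-in
          + 0.1 * rng.normal(size=n_samples))   # broadband noise

# One-sided power spectrum of the mean-removed signal.
power = np.abs(np.fft.rfft(signal - signal.mean())) ** 2
freqs = np.fft.rfftfreq(n_samples, d=1.0 / sample_rate_hz)

top_bins = np.argsort(power)[-2:]
top_two_hz = np.sort(freqs[top_bins])
concentration = power[top_bins].sum() / power.sum()

print(top_two_hz)               # frequencies of the two strongest spectral lines
print(round(concentration, 3))  # share of total spectral energy in those two lines
```

A `concentration` value near 1 means almost all of the signal's energy sits in two narrow lines rather than being spread across the band. On real tower records the peaks will have finite width, so one would typically sum power over a small band around each peak instead of a single bin.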

Building on this observation, the paper shows that the system’s apparent high-dimensional complexity collapses onto a remarkably low-dimensional manifold. Modal decomposition shows that over 97% of the system’s energy is captured by a single dominant mode, and over 99.5% by two to three modes. This result is critical: it indicates that the number of degrees of freedom actually governing tower dynamics is orders of magnitude smaller than the number implied by mesh-based simulation. What appears complex in time-domain signals is revealed to be structured oscillation within a tightly constrained spectral space.

The work then advances from structure to prediction by constructing a reduced operator directly from the data. This operator governs the evolution of the system within its low-dimensional modal space and consistently outperforms baseline persistence models in predicting future behavior. Importantly, this predictive capability emerges without introducing stochastic models, filtering techniques, or external forcing assumptions. Instead, prediction is shown to be an intrinsic consequence of the operator’s spectral structure, reinforcing the central thesis that system dynamics are governed by deterministic modal interactions rather than random perturbations.

For engineering, simulation, and industry, the implications are profound. The study demonstrates that traditional mesh-driven approaches—requiring millions of degrees of freedom—are not fundamentally necessary to capture system behavior. By identifying and evolving the governing modes directly, simulation can be reduced to a compact, interpretable, and computationally efficient framework. This represents a shift from approximation to representation: from resolving every local interaction to understanding the global structure that governs motion. More broadly, the whitepaper provides strong empirical evidence for a unifying principle—that across physical systems, what is perceived as noise is often unresolved structure, and that true understanding comes from identifying the operator that defines it.

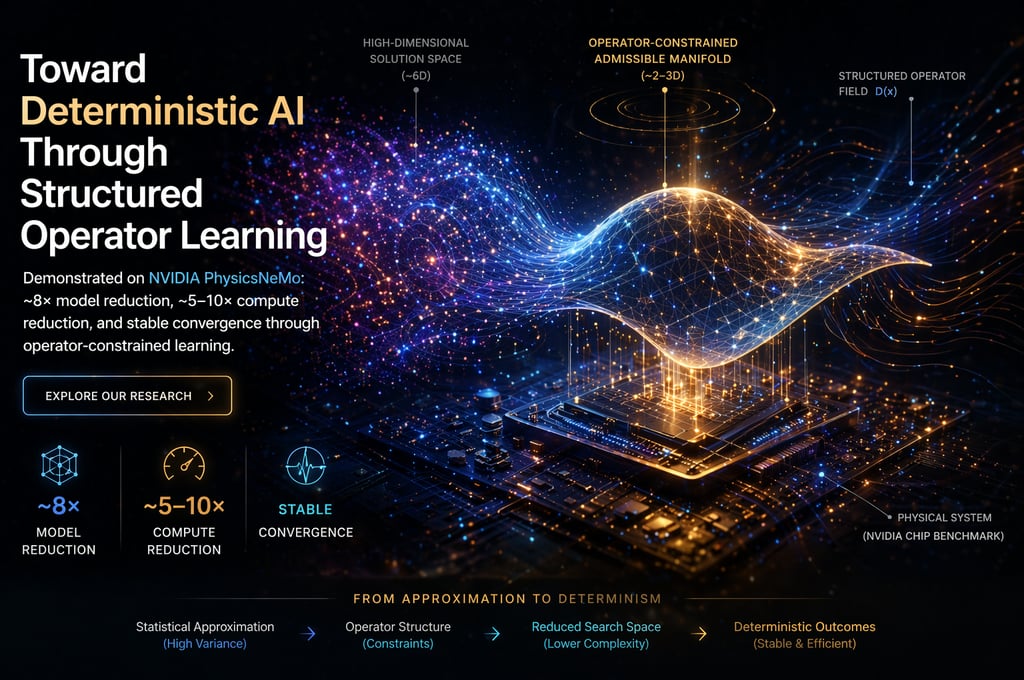

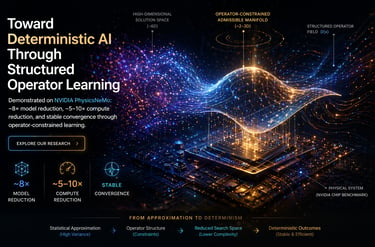

Modern AI systems, particularly in physics-based domains, rely heavily on statistical approximation to recover underlying behavior from data. While effective, this approach introduces significant computational overhead, instability, and an inherent dependence on large-scale models to capture structure that is not explicitly encoded. Our work represents a shift in this paradigm. Rather than learning structure implicitly, we introduce it directly into the governing operator, allowing systems to evolve within a constrained, admissible manifold from the outset.

In collaboration with NVIDIA’s PhysicsNeMo benchmark, we demonstrated that introducing structured operator constraints reduces the dimensionality of the problem before optimization begins. The result is a system that requires substantially fewer degrees of freedom to reach the same solution. As shown in our validation, model capacity was reduced by approximately 8×, while maintaining baseline accuracy (~0.0374), with an accompanying 5–10× reduction in effective compute per iteration. These results are not the consequence of tuning or architectural changes, but of modifying how motion is mathematically represented.

The key insight is that much of the computational burden in modern AI systems arises not from the complexity of the physics itself, but from the absence of structure in its representation. When the governing operator does not encode admissibility, the system must explore a high-dimensional solution space and correct errors iteratively through gradient updates. By embedding structure directly into the operator, this search space contracts, convergence becomes more stable, and computational effort is more efficiently allocated. This transition—from unconstrained approximation to structured evolution—is visible in the smooth, well-conditioned convergence behavior across all successful configurations.

This direction points toward a broader concept we refer to as deterministic AI. Not in the sense of eliminating learning, but in reducing reliance on stochastic approximation by encoding the constraints of motion directly into the system. In this regime, models do not simply approximate outcomes—they evolve along structured pathways defined by the underlying physics. As demonstrated in our work, even partial introduction of this structure produces measurable gains in efficiency, stability, and fidelity across complex multi-physics systems.

Looking forward, this framework establishes a path for integrating structured operator learning into modern compute stacks, including CUDA-accelerated simulation environments. By shifting complexity from the optimization layer into the mathematical formulation itself, we enable more scalable, efficient, and robust systems—capable of operating on reduced admissible manifolds while maintaining full compatibility with existing infrastructure. This is not a replacement of current AI methods, but a refinement of them, bringing us closer to a future where intelligent systems compute outcomes with greater structure, efficiency, and determinism.

This whitepaper presents the first continent-scale empirical reconstruction of a SpaceX Falcon 9 re-entry fragment using multi-station observations from the Global Meteor Network dataset. By combining time-resolved trajectory picks with precise astrometric calibration, we directly reconstruct the motion of a re-entering fragment across Europe with kilometer-scale accuracy. The result is a clear, data-driven view of re-entry dynamics—one that reveals a narrow, corridor-confined, and temporally ordered evolution rather than a diffuse or randomly dispersed event.

At the core of the paper is a new mathematical framework that reinterprets re-entry physics through structured operator dynamics. Instead of relying solely on computational fluid dynamics and stochastic fragmentation models, we treat motion as constrained by an underlying admissible manifold governed by geometry and state-dependent structure. Within this formulation, drag, deceleration, and fragmentation emerge not as independent random effects, but as the natural consequence of motion evolving through regions of increasing constraint. The empirical reconstruction aligns with this framework, demonstrating smooth deceleration, spatial confinement, and strong temporal coherence across a distributed sensor network.

This shift from approximation to structure has direct implications for aerospace engineering and operations. For SpaceX, it enables tighter prediction of re-entry trajectories, significantly reducing uncertainty zones and allowing for narrower, more accurate safety corridors. This translates into reduced airspace disruption, improved coordination with aviation systems, and lower operational costs. At the engineering level, the ability to recover consistent deceleration profiles and identify structured transition regimes provides a foundation for improved fragmentation modeling, optimized thermal protection strategies, and more efficient structural design.

More broadly, the paper introduces a new paradigm for modeling high-energy atmospheric motion—one in which deterministic structure replaces statistical uncertainty as the primary lens of interpretation. By grounding theory in real observational data, it demonstrates that re-entry behavior can be understood, predicted, and ultimately controlled with greater precision than previously assumed. This approach opens the door to more efficient simulation frameworks, better integration of observational validation, and a new generation of aerospace technologies built on structured, data-driven insight rather than probabilistic approximation.