The AstraNomos PRISM Simulation Engine

A physics-based digital twin platform that reveals hidden structure in physical systems

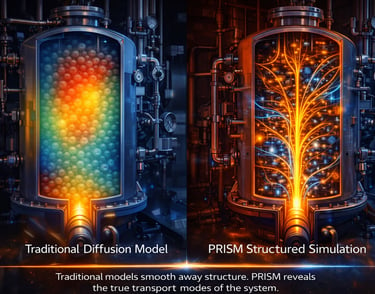

The AstraNomos PRISM Simulation Engine is a next-generation modeling framework for analyzing complex physical systems. Rather than relying solely on diffusion-based models or statistical approximations, PRISM uses structured spectral operators to reveal how energy, motion, and transport evolve within the geometry of a system. This allows AstraNomos digital twins to detect confinement, amplification, and transport modes that conventional models often interpret as noise or randomness.

For engineers, researchers, and industry partners, this technology translates into powerful simulation capability. The PRISM engine can model complex systems across domains such as energy infrastructure, aerospace systems, nuclear reactors, semiconductor cooling networks, plasma transport, and advanced manufacturing. By identifying the true transport structure within these systems, AstraNomos simulations help organizations predict system behavior, optimize performance, and detect hidden failure mechanisms before they emerge.

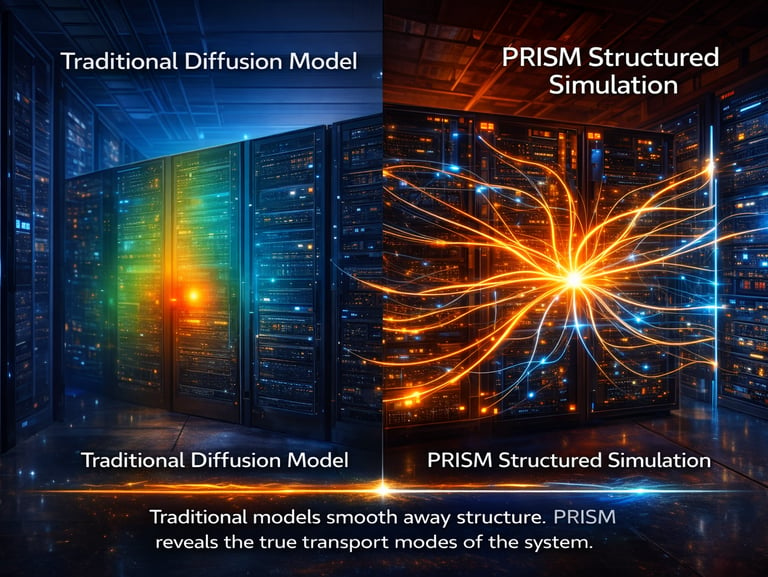

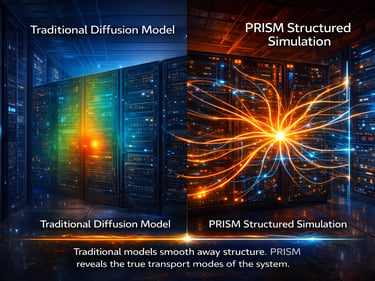

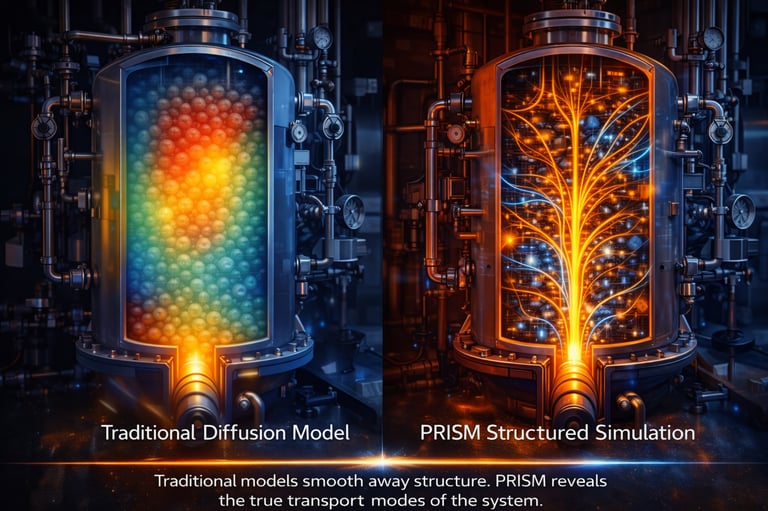

Traditional simulation tools assume that complex behavior is fundamentally stochastic and therefore smooth it away using diffusion approximations. PRISM takes a different approach: it identifies when apparent randomness actually reflects deeper geometric structure in the governing dynamics. By modeling these systems through their underlying operator spectrum, AstraNomos digital twins uncover transport modes that remain invisible to conventional models.

Scientific Foundations of the PRISM Engine

The AstraNomos PRISM Simulation Engine is built on a unified mathematical framework that changes how complex physical systems are simulated. Traditional engineering simulations—such as computational fluid dynamics (CFD) or heat transport modeling—solve governing equations across extremely large meshes. Engineers typically discretize a physical system into millions or billions of grid cells and numerically compute how energy, flow, or temperature evolves across that entire grid.

While this approach is accurate, it is computationally expensive because the solver must indirectly reconstruct the physical structure of the system through brute-force numerical resolution. Phenomena such as hotspots, recirculation zones, or confined transport pathways only emerge after large amounts of iterative computation and mesh refinement.

The PRISM framework approaches the problem differently. Instead of solving for every point in a large spatial mesh, the system is modeled through the natural transport modes of the physics itself. These modes arise from the spectral structure of the governing transport operator and represent the physically admissible pathways through which energy and motion evolve in the system.

In practical terms, this means the simulation can focus on the dominant transport modes rather than millions of independent mesh variables. Many real systems—such as turbine cooling flows, reactor heat transport, semiconductor thermal fields, or packed-bed reactors—are governed by a relatively small set of structured transport pathways. By identifying those modes directly, PRISM reduces the effective dimensionality of the simulation from millions of variables to hundreds of physically meaningful degrees of freedom.

For engineers, this reduces the computational burden of simulation by several orders of magnitude. Where a traditional CFD workflow discretizes a system into millions or even billions of grid cells, PRISM evolves the simulation along a reduced spectral basis of roughly 100–200 physically meaningful modes.
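The idea of evolving a few modes instead of every grid value can be illustrated with a minimal sketch. It is not the PRISM implementation: it assumes plain 1D heat diffusion on a uniform grid with fixed-temperature ends, and the function name `reduced_mode_error` and all parameter values are hypothetical. The dense eigendecomposition used here is itself expensive, so the sketch shows only the projection step, not a speedup.

```python
import numpy as np

def reduced_mode_error(n=400, n_modes=20, alpha=1.0, t=0.01):
    """Relative error of an n_modes solution versus the all-modes solution.

    Model problem (an assumption for illustration): du/dt = alpha * d2u/dx2
    on (0, 1) with u = 0 at both ends, discretized on n interior points.
    """
    h = 1.0 / (n + 1)
    x = np.linspace(h, 1.0 - h, n)  # interior grid points, spacing h

    # Discrete operator A ~ -alpha * d2/dx2 (symmetric, positive definite).
    A = alpha * (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2

    # eigh returns eigenvalues in ascending order with orthonormal
    # eigenvectors as columns, so the first columns decay the slowest.
    lam, phi = np.linalg.eigh(A)

    u0 = np.exp(-((x - 0.3) / 0.1) ** 2)  # smooth initial hot spot

    a0 = phi.T @ u0               # modal amplitudes at t = 0
    a_t = np.exp(-lam * t) * a0   # each amplitude decays independently

    u_full = phi @ a_t                              # all n modes
    u_reduced = phi[:, :n_modes] @ a_t[:n_modes]    # slowest n_modes only

    return np.linalg.norm(u_full - u_reduced) / np.linalg.norm(u_full)

if __name__ == "__main__":
    for m in (2, 5, 20):
        print(m, "modes -> relative error", reduced_mode_error(n_modes=m))
```

In this toy problem the error falls quickly as modes are added because diffusion damps the fast modes. How many modes a real system needs depends on its operator and geometry.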

Equally important, the framework offers a new interpretation of phenomena traditionally treated as stochastic. In many simulation pipelines, unresolved structure is approximated through statistical turbulence models or diffusion approximations. In the PRISM formulation, much of this apparent randomness corresponds to unresolved transport structure. Once the correct transport operator is defined, these behaviors emerge as deterministic transport modes rather than statistical noise.

The result is a new simulation paradigm that complements, and in some regimes may replace, traditional diffusion-based modeling approaches. By identifying the true transport basis of a system and evolving the dynamics within that structured space, the PRISM engine enables faster simulations while preserving the essential physics governing transport in complex systems.

The PRISM framework has been tested across a wide range of experimental and industrial systems where classical transport models struggle to resolve localized behavior. These studies include structured packed-bed reactors, semiconductor thermal maps, turbine blade heat-transfer experiments, and data-center cooling environments. Across these domains, the same pattern emerges: hotspots, transport bottlenecks, and instability zones arise deterministically from geometry and load placement rather than from stochastic fluctuations.

In each system, PRISM models transport through its underlying operator structure rather than through brute-force spatial discretization. Classical simulation methods rely on dense computational meshes containing millions or billions of cells in order to approximate the governing equations of motion. By contrast, the PRISM framework resolves transport through a much smaller set of physically admissible modes derived from the system geometry itself.

This shift dramatically reduces simulation complexity. Problems that traditionally require millions or billions of mesh elements can instead be represented using up to roughly 200 transport modes, allowing engineers to analyze energy transport pathways directly rather than approximating them through dense grids. In practice, this enables deterministic digital twins that reveal hotspots, bottlenecks, and instability regions while reducing the computational burden of simulation by several orders of magnitude.

Engineering Validation and the End of Mesh-Driven Simulation

The research shown above represents a fundamental shift in how complex physical systems can be simulated and optimized. Traditional engineering simulations, particularly in computational fluid dynamics (CFD), heat transfer, and transport modeling, rely on discretizing physical domains into millions or even billions of mesh cells. Each of these cells must be solved iteratively, which requires enormous computational resources, long run times, and expensive hardware infrastructure. The operator-based simulation framework presented here replaces that paradigm by modeling the system through a small set of dominant transport modes derived from spectral operator dynamics. Instead of solving billions of local equations, the framework captures system behavior with up to roughly 200 governing modes, dramatically reducing computational complexity.

For companies operating large engineering assets—such as data centers, power plants, semiconductor facilities, aerospace systems, or industrial manufacturing equipment—this reduction translates directly into orders-of-magnitude efficiency gains. Simulations that previously required hours, days, or large HPC clusters can now be executed in minutes on modest computing infrastructure. Faster simulation cycles enable engineers to test more design variations, evaluate operational scenarios in real time, and identify thermal or fluid dynamic risks long before they become costly failures. The economic impact is substantial: reduced cloud computing costs, faster product development timelines, improved asset reliability, and significantly lower energy and maintenance expenditures.

Beyond speed alone, the framework improves predictive clarity and decision-making. Mesh-based simulations often introduce numerical noise, grid sensitivity, and stochastic artifacts that obscure the underlying physics. By reducing the system to its dominant transport operators, the simulation becomes more deterministic and interpretable. This allows engineers and operators to focus on the true drivers of system behavior rather than artifacts of numerical discretization. For organizations deploying digital twins or predictive maintenance systems, the result is a simulation engine that is not only faster and cheaper, but also more reliable for guiding real-world operational decisions.

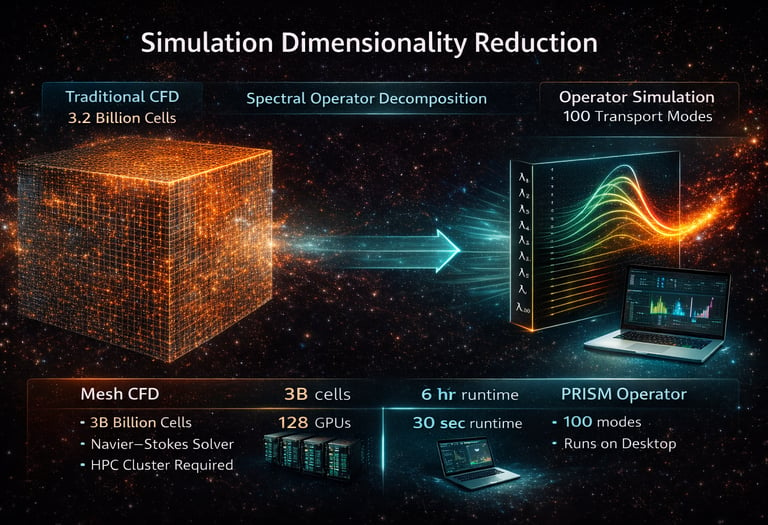

The image illustrates a fundamental shift in how complex physical systems can be simulated. On the left side, the cube represents a traditional computational fluid dynamics (CFD) model, where a physical domain is discretized into an extremely dense mesh containing billions of grid cells. Each cell represents a local approximation of the governing transport equations, typically derived from Fourier heat diffusion or the Navier–Stokes equations, and the solver must iteratively update the state of every cell to reconstruct the system dynamics. This approach can require millions to billions of degrees of freedom and extensive computational infrastructure.

The arrow in the center represents a spectral operator decomposition, where the same physical system is analyzed through the eigenmodes of the governing transport operator rather than through brute-force spatial discretization.

On the right side of the image, the system is represented by roughly 100 dominant transport modes, which capture the physically admissible pathways through which energy or momentum propagates. Instead of evolving billions of mesh variables, the simulation evolves only the modal amplitudes associated with these operator modes.
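In symbols, the modal picture described above can be sketched as follows. The notation is illustrative and not taken from the PRISM papers: it assumes a linear, time-independent transport operator $\mathcal{L}$ with a discrete set of eigenmodes $\phi_k$, a field $u(x,t)$, and a truncation to $K$ retained modes (roughly 100 in the image).

```latex
\frac{\partial u}{\partial t} = -\mathcal{L}\,u,
\qquad
\mathcal{L}\,\phi_k = \lambda_k\,\phi_k,
\qquad
u(x,t) \approx \sum_{k=1}^{K} a_k(t)\,\phi_k(x),
\qquad
\frac{d a_k}{d t} = -\lambda_k\,a_k
\;\Longrightarrow\;
a_k(t) = a_k(0)\,e^{-\lambda_k t}.
```

Under these assumptions each modal amplitude $a_k$ evolves independently, so the solver tracks $K$ scalars rather than one unknown per mesh cell.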

This dimensionality reduction has profound computational implications. In traditional CFD workflows, the computational cost scales roughly with the number of mesh cells multiplied by the number of solver iterations required for convergence. Industrial CFD models frequently contain 10⁶–10⁹ mesh cells, and a single high-fidelity simulation can require hours to days of runtime on clusters containing dozens to hundreds of GPUs or CPU nodes. By contrast, when the system is projected onto its spectral transport modes, the effective number of degrees of freedom can drop to ~10² modes, reducing the dimensionality of the problem by several orders of magnitude. In practical terms, simulations that previously required multi-node high-performance computing clusters can often be executed on a workstation or laptop because the solver evolves only the modal coefficients governing the dominant transport physics.
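The size of that reduction follows directly from the figures quoted above. The short sketch below counts unknowns only; it assumes nothing about runtime, which also depends on the cost of finding the modes and on the solver used. The function name is hypothetical.

```python
import math

def orders_of_magnitude_reduction(mesh_cells: float, modes: float) -> float:
    """Base-10 log of the ratio between mesh unknowns and retained modes."""
    return math.log10(mesh_cells / modes)

if __name__ == "__main__":
    # Mesh sizes and mode count are the ranges quoted in the text.
    for cells in (1e6, 1e9):
        orders = orders_of_magnitude_reduction(cells, 1e2)
        print(f"{cells:.0e} cells vs 1e2 modes: {orders:.0f} orders of magnitude")
```

For 10⁶ to 10⁹ cells against ~10² modes, the reduction in unknowns spans four to seven orders of magnitude.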

The economic implications for industries that rely heavily on simulation are substantial. Semiconductor companies such as NVIDIA, Intel, AMD, and TSMC collectively spend tens of billions of dollars annually on research and development, with a significant portion devoted to simulation infrastructure, multiphysics modeling, and high-performance compute resources used for thermal, fluid, and structural analysis. Even modest improvements in simulation efficiency can produce enormous savings when propagated across these workflows. For example, if a design team currently runs 10,000 CFD simulations per year, and each simulation requires an HPC cluster costing roughly $1,000–$10,000 in compute time, the annual compute expenditure can easily reach $10–$100 million for a single design program. Reducing simulation dimensionality by several orders of magnitude can shrink both runtime and hardware requirements dramatically, allowing engineers to run far more design iterations while using substantially less infrastructure.
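The arithmetic behind the $10–$100 million figure can be checked in a few lines. The run count and per-run costs are the illustrative values from the paragraph above, not measured data, and the function name is hypothetical.

```python
def annual_compute_cost(runs_per_year: int, cost_per_run_usd: float) -> float:
    """Yearly compute spend for a design program, in US dollars."""
    return runs_per_year * cost_per_run_usd

low = annual_compute_cost(10_000, 1_000)    # 10,000 runs at $1,000 each
high = annual_compute_cost(10_000, 10_000)  # 10,000 runs at $10,000 each

if __name__ == "__main__":
    print(f"${low / 1e6:.0f}M to ${high / 1e6:.0f}M per year")
    # prints: $10M to $100M per year
```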

Equally important is the improvement in predictive accuracy where it matters most. Real engineering systems—such as microchip thermal transport, turbine blade cooling, and reactor heat transfer—are dominated by localized structures like hotspots and transport channels. In silicon thermal datasets, curvature-aware transport models have demonstrated ~18–24% reductions in mean-squared error at hotspot boundaries and up to ~55–60% reductions in the most extreme high-curvature regions, while maintaining compatibility with classical diffusion behavior in smooth regimes. These improvements translate directly into economic value because localized prediction errors drive conservative guard-banding in engineering design. For semiconductor manufacturers shipping hundreds of millions of chips per year, even a 1–2% improvement in thermal prediction accuracy can enable tighter clocking margins, reduced throttling, improved performance binning, and lower cooling overhead—benefits that scale into tens or hundreds of millions of dollars annually when applied across large product lines.

In essence, the image communicates that the true opportunity is not merely faster simulation but structurally better physics with dramatically fewer computational degrees of freedom. By identifying the natural transport modes of a system rather than reconstructing them through dense meshes, the operator-based framework reduces computational complexity while improving the physical interpretability of simulation results. For industries already investing billions of dollars in HPC infrastructure and digital twin technologies, this approach provides a powerful leverage point: improving the governing physics itself allows every layer of the simulation ecosystem—from solvers and GPU acceleration to AI surrogates and digital twins—to operate on a more accurate and efficient representation of the underlying system.

The Future of Engineering Simulation

For more than half a century, advances in engineering simulation have been driven primarily by increases in computational power. Faster processors, larger clusters, and more sophisticated numerical solvers have allowed engineers to simulate increasingly complex systems. Yet the underlying paradigm has remained largely unchanged: reconstruct the physics of a system by discretizing it into millions or billions of mesh cells and iteratively solving the governing equations across that grid. This is intensive computation without understanding causality: enormous numerical effort devoted to fitting curves, rather than understanding the physical structure that generates them.

The AstraNomos PRISM simulation framework represents a different approach. Instead of reconstructing physical transport through brute-force spatial discretization, PRISM analyzes the spectral structure of the governing transport operator. The dominant behavior of many systems (thermal transport, fluid momentum, energy propagation) can often be represented through a small set of natural transport modes determined by the geometry and constraints of the system. In practical engineering environments, these modes typically number up to roughly 200, even when equivalent CFD simulations require millions or billions of mesh cells. Replacing dense mesh variables with the modes of a modified governing Sturm–Liouville operator isolates the true structure of motion within a system. In this sense, the approach follows the same scientific progression that began with Galileo’s study of motion, continued through Kepler’s discovery of orbital laws, was formalized geometrically by Descartes, and ultimately culminated in Newton’s formulation of universal dynamics: replacing numerical description with the governing principles that produce it.
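For reference, the classical Sturm–Liouville eigenvalue problem on an interval $[a, b]$ has the standard textbook form below. The text does not state how PRISM modifies this operator, so the coefficient functions $p$, $q$, and $w$ are generic placeholders rather than PRISM's actual choices.

```latex
-\frac{d}{dx}\!\left[\,p(x)\,\frac{d\phi_k}{dx}\right] + q(x)\,\phi_k
  = \lambda_k\,w(x)\,\phi_k,
\qquad
\int_a^b \phi_j(x)\,\phi_k(x)\,w(x)\,dx = \delta_{jk}.
```

For a regular problem with suitable boundary conditions, the normalized eigenfunctions are orthogonal with respect to the weight $w$. That orthogonality is what allows a field to be projected onto a small number of modes.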

This shift from mesh reconstruction to operator mechanics dramatically reduces the computational complexity required to simulate complex systems. Simulations that previously demanded high-performance computing clusters and hours of runtime can often be reduced to compact spectral models that capture the same governing physics with far fewer degrees of freedom. For engineers, this means faster iteration cycles, more interpretable models, and earlier detection of critical system behavior such as hotspots, instability zones, and constrained transport pathways.

The implications extend far beyond computational efficiency. Because the operator framework reveals the underlying transport modes of a system directly, it enables a new generation of physics-driven digital twins capable of diagnosing and predicting system behavior in real time. Instead of merely approximating field values across dense numerical grids, simulations can evolve along the natural energy pathways of the system, providing clearer insight into how geometry, boundary conditions, and system constraints govern the evolution of complex processes.

For industries that rely heavily on simulation—including semiconductor design, aerospace engineering, energy infrastructure, advanced manufacturing, and large-scale computing systems—this approach offers a powerful economic advantage. By reducing the dimensionality of simulation problems while improving the interpretability of their results, operator-based modeling allows organizations to extract more value from their existing simulation investments while dramatically lowering computational overhead.

Therefore, the transition from mesh-based simulation to operator-based modeling represents more than a technical improvement. It reflects a deeper alignment between numerical simulation and the mathematical structure of physical law. As digital twins and predictive engineering systems become increasingly central to industrial decision-making, frameworks that evolve systems directly through their governing operators may define the next generation of simulation technology.

Explore Our Research

To learn more about the mathematical foundations and engineering validation behind the PRISM simulation engine, download our research papers or contact our team to discuss potential applications within your organization.